In the United States, there is a growing focus on AI risk management and the need for responsible and ethical AI practices. Governments and regulators are taking action to address the potential harms caused by AI and algorithms.

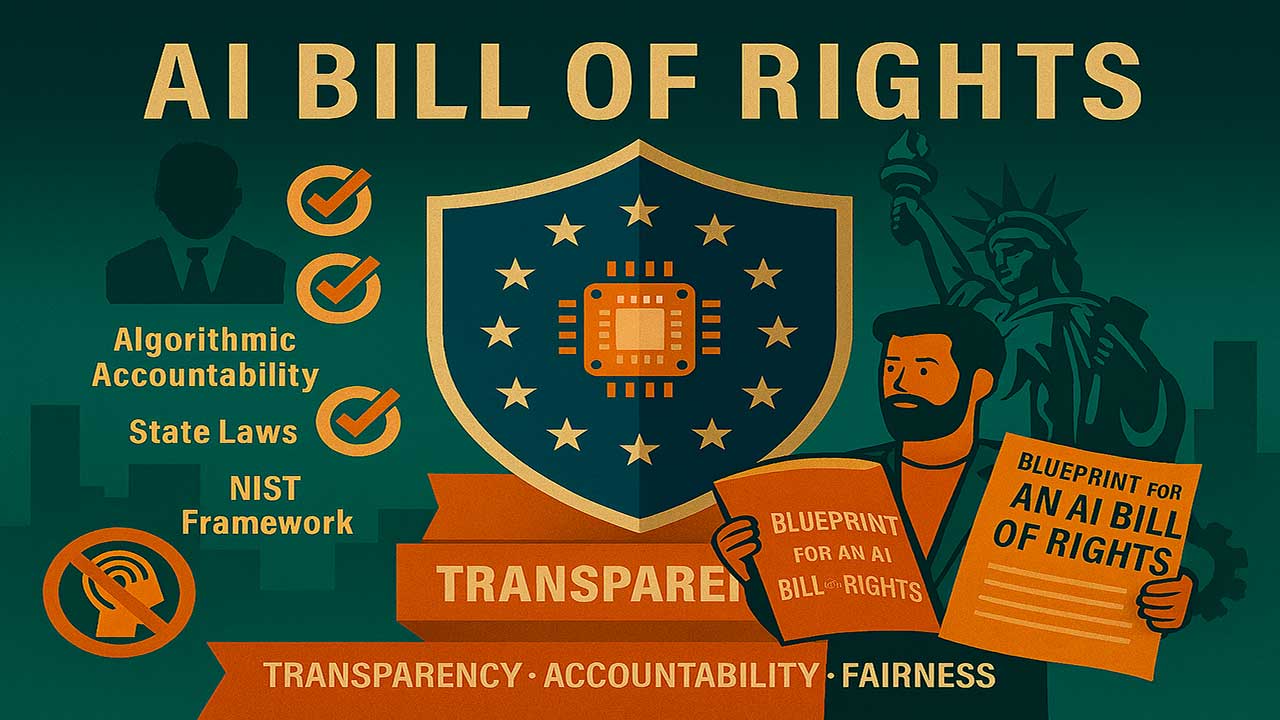

At the federal level, lawmakers have proposed the Algorithmic Accountability Act, while the National Institute of Standards and Technology (NIST) has developed an AI Risk Management Framework. Additionally, several states have passed or proposed laws that regulate AI use in specific contexts, such as video interviews and insurance decisions, or that require bias audits.

The White House Office of Science and Technology Policy published the Blueprint for an AI Bill of Rights in October 2022. It outlines five principles to guide the design, use, and deployment of AI systems: safe and effective systems; algorithmic discrimination protections; data privacy; notice and explanation; and human alternatives, consideration, and fallback.

To help organizations implement these principles, the White House also released "From Principles to Practice," a technical companion to the Blueprint.

These efforts reflect a collaborative approach involving researchers, technologists, advocates, and policymakers to ensure the responsible development and use of AI. By fostering transparency, accountability, and fairness, the United States aims to set a positive example for AI governance worldwide.