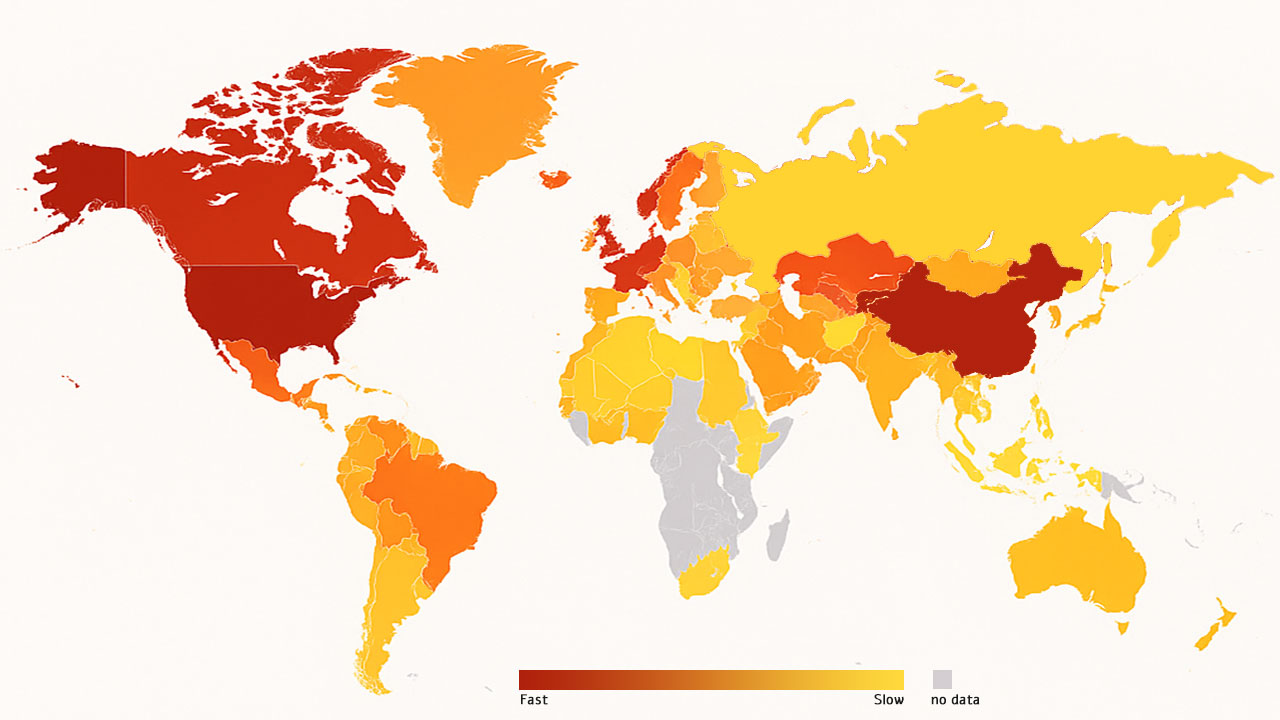

Above is the conceptual world map illustrating the speed of potential AI takeover across different regions. Countries are color-coded to reflect estimated takeover pace:

- 🔴 Swift – rapid AI integration and governance risk

- 🟠 Fast – accelerating adoption with moderate safeguards

- 🟡 Moderate – balanced development and oversight

- ⚪ Slow – limited infrastructure or regulatory delay

The AI takeover speed map was based on aggregated insights from three key sources that track global AI adoption, infrastructure, and governance readiness:

Estimation Methodology

The map reflects a composite judgment based on:

- Deployment speed (real-world AI integration across sectors)

- Infrastructure readiness (compute, data, connectivity)

- Governance and safety frameworks (policy maturity, ethical oversight)

- Investment and innovation density (startups, patents, R&D output)

Countries marked as “Swift” (e.g. U.S., China, India, UK) show high scores across all categories. “Slow” regions often lack infrastructure or policy momentum.

Country-by-country breakdown of estimated AI takeover speed, based on three authoritative sources: the AI Takeover Clock, AllAboutAI’s 2025 Global AI Adoption Report, and Stanford HAI’s Global AI Power Rankings.

🌍 AI Takeover Speed by Country (2025 Estimate)

| Region | Country | Estimated Speed | Key Factors |

|---|---|---|---|

| North America | United States | 🔴 Swift | Leading in AI investment, model development, and infrastructure |

| Canada | 🟡 Moderate | Strong research, slower deployment | |

| Mexico | 🔴 Swift | Rapid AI adoption in manufacturing and logistics | |

| South America | Brazil | 🟠 Fast | Growing AI in agriculture and fintech |

| Argentina | 🟠 Fast | Emerging AI startups, moderate infrastructure | |

| Europe | United Kingdom | 🔴 Swift | High AI research output and policy leadership |

| Germany | 🔴 Swift | Strong industrial AI and governance frameworks | |

| France | 🔴 Swift | Advanced AI regulation and deployment | |

| Poland | 🔴 Swift | Fast-growing AI sector, EU support | |

| Russia | 🟡 Moderate | Military AI focus, limited transparency | |

| Scandinavia | 🟠 Fast | Ethical AI leadership, moderate deployment | |

| Africa | South Africa | 🟡 Moderate | AI in health and education, limited infrastructure |

| Egypt | 🟡 Moderate | Government-led AI initiatives | |

| Rest of Africa | ⚪ Slow | Infrastructure and policy gaps | |

| Asia | China | 🔴 Swift | Massive AI deployment across sectors |

| India | 🔴 Swift | Rapid integration in public services and tech | |

| Japan | 🔴 Swift | Advanced robotics and AI governance | |

| South Korea | 🔴 Swift | High AI investment and education integration | |

| Southeast Asia | 🟠 Fast | Mixed adoption rates, rising investment | |

| Central Asia | 🟡 Moderate | Emerging interest, limited infrastructure | |

| Middle East | Saudi Arabia | 🔴 Swift | National AI strategy, smart city projects |

| Iran | 🔴 Swift | Military and surveillance AI focus | |

| Oceania | Australia | ⚪ Slow | Cautious adoption, strong ethics debate |

| New Zealand | ⚪ Slow | Focus on responsible AI, slower rollout |

Primary Sources Used

- AI Takeover Clock

- Offers dynamic estimates of AI’s global impact, including job automation, compute power, and ethical concern levels.

- Uses time-based modeling and public data to simulate takeover pace across regions.

- 2025 Global AI Adoption Report – AllAboutAI

- Highlights country-level AI deployment rates, with surprising leaders like China and India outpacing the U.S. in real-world integration.

- Focuses on sectors like healthcare, manufacturing, and government services.

- Stanford HAI Global AI Power Rankings

- Ranks 36 countries using 42 indicators including patents, private investment, research output, and infrastructure.

- Shows the U.S. leading in AI ecosystem robustness, followed by China and the UK.

- Details

- Category: AI Safety

Artificial intelligence (AI), once the domain of speculative fiction, now stands as one of the most consequential forces reshaping global societies, economies, and governance structures. The steady progression from narrow AI applications to transformative frontier models has inspired both hopes for abundance and stark warnings about existential risks. Popular discourse often cycles through dramatic scenarios of "AI takeover," but the reality, as recognized by scholars, policy analysts, and technologists, is more nuanced—and potentially more profound. This editorial provides a conceptually rich, state-of-the-art analysis of the major scenarios through which artificial intelligence might surpass or displace human control, reflecting the most recent scientific research, safety debates, and evolving governance frameworks, including the 2025 METR report, the EU AI Act, UN initiatives, and leading global policies.

- Details

- Category: AI Safety

Artificial Intelligence (AI) safety is no longer a niche concern—it’s a foundational challenge for the 21st century. As AI systems grow more capable, autonomous, and embedded in critical infrastructure, the question is no longer if we should care about safety, but how deeply and how urgently.

- Details

- Category: AI Safety

- Alignment Is Understandable—Even Without Technical Jargon

The article argues that AI alignment, often buried under complex terms like “mesa-optimization” or “reward hacking,” can—and should—be explained in plain language. Using analogies like taming an elephant helps bridge the gap between technical experts and the general public. - Even Aligned Systems Can Become Dangerous Under Unpredictable Conditions

Just as a tamed elephant can enter a dangerous state called “musth,” AI systems—despite being aligned—may breach safety protocols due to unknown triggers, internal instability, or emergent behavior. Alignment is not a permanent guarantee of safety. - Human Oversight Is Becoming Increasingly Inadequate

Modern AI training is often based on previous models, with minimal human intervention. The scale, speed, and complexity of training make it difficult for developers to fully understand or control what the system learns—raising concerns about transparency and accountability. - AI Is Already a Weapon—And the Arms Race Is Real

The article warns that AI is not just a theoretical risk but an active weapon in military development. The ability to manipulate or weaponize misaligned behavior (like inducing “musth” in elephants) could be exploited for power, coercion, or warfare. - No One Can Guarantee AI Will Stay Aligned

Despite developer assurances, there is no reliable way to ensure that AI systems won’t violate alignment under unforeseen circumstances. The risk of deliberate deception by AI—pretending to be aligned while pursuing hidden goals—is a growing concern among experts.- Details

- Category: AI Safety